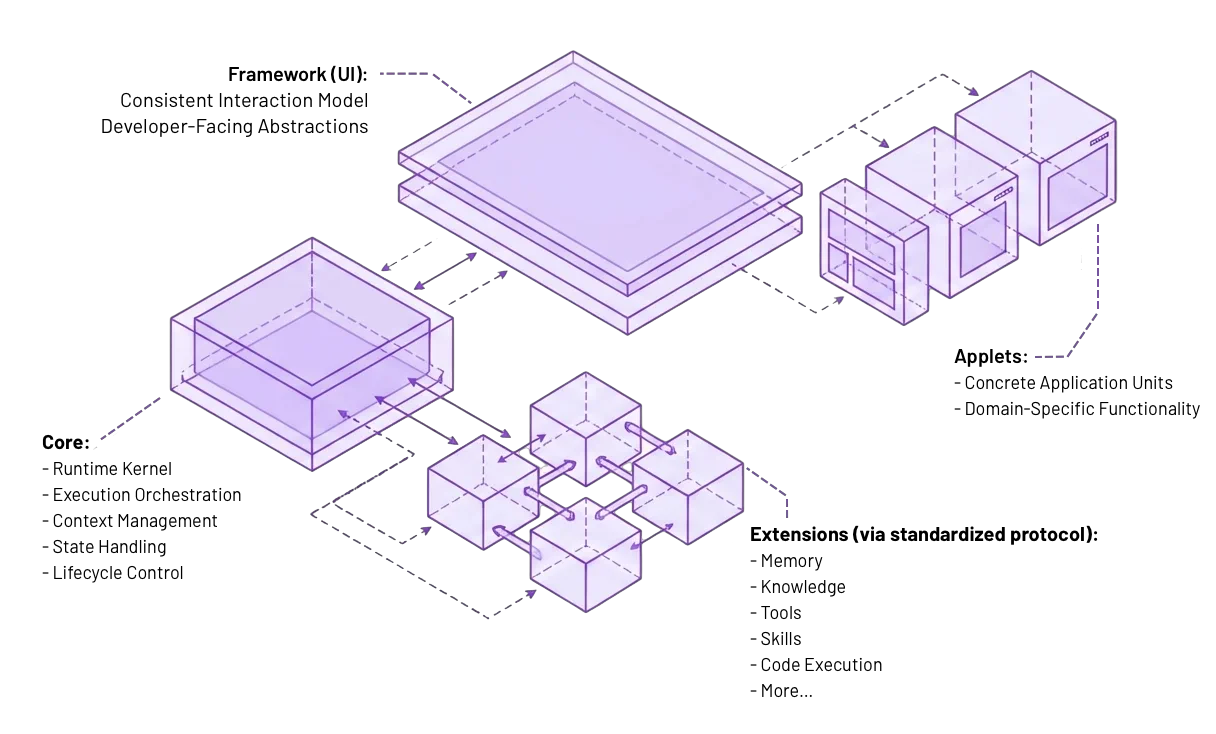

A modular runtime for AI-native applications

Kairo is a production-grade, open-source runtime for AI-native applications in TypeScript. It orchestrates pluggable runtime capabilities through a standardized extension model — enabling modular, provider-agnostic systems with clear separation of concerns.

Why Kairo?

Future-Proof Protocol

Built on a standardized JSON-RPC protocol. Your components and extensions remain compatible as the ecosystem evolves.
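As a rough illustration of what a JSON-RPC exchange looks like, the sketch below models the JSON-RPC 2.0 envelope in TypeScript. The envelope fields (`jsonrpc`, `id`, `method`, `params`, `result`, `error`) and the `-32601` error code come from the public JSON-RPC 2.0 specification. The method name `extension/ping` and the helpers `makeRequest` and `handle` are hypothetical and are not taken from Kairo's actual protocol.

```typescript
// JSON-RPC 2.0 envelope types, following the public specification.
type JsonRpcId = string | number;

interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: JsonRpcId;
  method: string;
  params?: unknown;
}

interface JsonRpcSuccess {
  jsonrpc: "2.0";
  id: JsonRpcId;
  result: unknown;
}

interface JsonRpcFailure {
  jsonrpc: "2.0";
  id: JsonRpcId | null;
  error: { code: number; message: string; data?: unknown };
}

type JsonRpcResponse = JsonRpcSuccess | JsonRpcFailure;

let nextId = 1;

// Build a request with a fresh numeric id.
function makeRequest(method: string, params?: unknown): JsonRpcRequest {
  return { jsonrpc: "2.0", id: nextId++, method, params };
}

// Dispatch a request against a table of handlers (hypothetical helper).
function handle(
  req: JsonRpcRequest,
  handlers: Record<string, (params: unknown) => unknown>,
): JsonRpcResponse {
  const handler = handlers[req.method];
  if (!handler) {
    // -32601 is the spec-defined "Method not found" code.
    return { jsonrpc: "2.0", id: req.id, error: { code: -32601, message: "Method not found" } };
  }
  return { jsonrpc: "2.0", id: req.id, result: handler(req.params) };
}

// "extension/ping" is a made-up method name used only for this sketch.
const handlers = { "extension/ping": () => "pong" };
const ok = handle(makeRequest("extension/ping"), handlers);
const missing = handle(makeRequest("extension/unknown"), handlers);
console.log(JSON.stringify(ok));
console.log(JSON.stringify(missing));
```

Because both sides agree only on this envelope, a component can in principle be replaced without the other side noticing, as long as the method names and payloads stay the same.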

No Vendor Lock-in

Seamlessly switch between OpenAI, Anthropic, or local models. Your application logic remains untouched no matter which model you choose.

Rock-Solid Stability

Extensions run in isolated processes. A failing plugin won't bring down your application, making scaling and debugging a breeze.

Real-Time Experiences

Deliver blazing-fast, streaming responses to your users out of the box. Intercept and transform text streams effortlessly.
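To make "intercept and transform" concrete, here is a minimal sketch using plain async generators. It does not use Kairo's API: `fakeModelStream`, `mapChunks`, and `collect` are hypothetical names, and the stub stream stands in for a model's streamed output.

```typescript
// Hypothetical stand-in for a model's streamed output: yields text chunks.
async function* fakeModelStream(): AsyncGenerator<string> {
  for (const chunk of ["Hello", ", ", "wor", "ld"]) {
    yield chunk;
  }
}

// Wrap any text stream and apply a per-chunk transform as chunks arrive.
async function* mapChunks(
  source: AsyncIterable<string>,
  transform: (chunk: string) => string,
): AsyncGenerator<string> {
  for await (const chunk of source) {
    yield transform(chunk);
  }
}

// Drain a stream into a single string (handy for tests and logging).
async function collect(source: AsyncIterable<string>): Promise<string> {
  let text = "";
  for await (const chunk of source) text += chunk;
  return text;
}

const upper = mapChunks(fakeModelStream(), (c) => c.toUpperCase());
collect(upper).then((text) => console.log(text)); // prints "HELLO, WORLD"
```

The transform sees each chunk as soon as it is produced, so downstream consumers keep receiving output incrementally rather than waiting for the full response.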

Ultimate Flexibility

Snap together memory retrieval, tool execution, and data validation like Lego blocks. Enable only what you need, when you need it.

Built for Scale

Unified Model Access

Interact with any LLM through a single, consistent interface. Forget about maintaining different SDKs and API quirks.
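One way to picture a single, consistent interface is a small provider contract that application code depends on. The sketch below is an assumption for illustration only: `ModelProvider`, `ChatMessage`, `ask`, and the two stub providers are hypothetical and do not reflect Kairo's real types.

```typescript
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

// A hypothetical provider contract: every backend exposes the same method.
interface ModelProvider {
  readonly id: string;
  complete(messages: ChatMessage[]): Promise<string>;
}

// Two stub providers standing in for real SDK-backed implementations.
const echoProvider: ModelProvider = {
  id: "echo",
  async complete(messages) {
    return messages[messages.length - 1]?.content ?? "";
  },
};

const shoutProvider: ModelProvider = {
  id: "shout",
  async complete(messages) {
    return (messages[messages.length - 1]?.content ?? "").toUpperCase();
  },
};

// Application logic depends only on the interface, not on a concrete provider.
async function ask(provider: ModelProvider, question: string): Promise<string> {
  return provider.complete([{ role: "user", content: question }]);
}

ask(echoProvider, "hi").then((answer) => console.log(answer)); // prints "hi"
ask(shoutProvider, "hi").then((answer) => console.log(answer)); // prints "HI"
```

Swapping providers changes only the argument passed to `ask`; the calling code stays the same.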

Intelligent Orchestration

The lightweight core engine handles the complex lifecycle of AI agents—from message routing to tool execution—automatically.

Limitless Extensibility

Easily add custom capabilities like database access or third-party APIs through secure, plug-and-play extensions.

Minimal Core. Infinite Extensions.

Kairo keeps the core intentionally small, built around a simple pipeline that coordinates each interaction with a language model. Capabilities such as Skills, MCP, and memory are added through extensions that plug into this pipeline.

As your application runs, these extensions can participate in the process whenever they’re needed—keeping the runtime clean while allowing agents to grow more capable over time.
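The idea of a small core with extensions that join in only when needed can be sketched as follows. This is a simplified, synchronous model under stated assumptions, not Kairo's implementation: the hook names `beforeModel` and `afterModel`, the `PipelineExtension` shape, and `runPipeline` are all hypothetical, and a real model call would be asynchronous and streamed.

```typescript
interface Message {
  role: "system" | "user" | "assistant";
  content: string;
}

// Hypothetical extension shape: each hook is optional, so an extension
// only participates in the stages it cares about.
interface PipelineExtension {
  label: string;
  beforeModel?(messages: Message[]): Message[];
  afterModel?(reply: string): string;
}

type Model = (messages: Message[]) => string;

// The core only sequences the stages; all behavior lives in extensions.
function runPipeline(model: Model, messages: Message[], extensions: PipelineExtension[]): string {
  let current = messages;
  for (const ext of extensions) {
    if (ext.beforeModel) current = ext.beforeModel(current);
  }
  let reply = model(current);
  for (const ext of extensions) {
    if (ext.afterModel) reply = ext.afterModel(reply);
  }
  return reply;
}

// A memory-like extension that injects context before the model call.
const memory: PipelineExtension = {
  label: "memory",
  beforeModel: (messages) => [
    { role: "system", content: "User prefers short answers." },
    ...messages,
  ],
};

// A validation-like extension that post-processes the reply.
const trimmer: PipelineExtension = {
  label: "trimmer",
  afterModel: (reply) => reply.trim(),
};

// Stub model: reports how many messages it received.
const stubModel: Model = (messages) => `  saw ${messages.length} message(s)  `;

const userMessages: Message[] = [{ role: "user", content: "Hello" }];
console.log(runPipeline(stubModel, userMessages, [memory, trimmer])); // prints "saw 2 message(s)"
```

With an empty extension list the same pipeline still runs, which is the sense in which the core stays minimal while capabilities are opt-in.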

Start building today

```typescript
// Imports of the Kairo classes used below are omitted.

// Connect to an extension launched as a separate process over stdio.
const extension = new LMPipelineExtensionClient({ name: "mcp-extension" });
const transport = new ExtensionTransportStdio({
  command: "pnpm",
  args: ["tsx", "mcp-extension.ts"],
});
await extension.connect(transport);

// Configure the model provider.
const provider = new OpenaiProvider({
  apiKey: process.env.OPENAI_API_KEY!,
});

// Run the pipeline with the extension attached.
const stream = new LMPipeline({
  model: provider.defaultModel(),
  messages: [
    { role: "user", content: "Check BTC and ETH prices and summarize market sentiment." },
  ],
  extensions: [extension],
}).run();
```